The $10/Hour AI Employee: When the Math Actually Works

At what hourly rate does it make sense to delegate work to an AI agent instead of doing it yourself?

This isn’t a philosophical question. It’s arithmetic.

Running Claude Code autonomously costs approximately $10.42 per hour. That’s a measured burn rate from 24 hours of continuous operation using Sonnet, reported by practitioners who’ve tracked their actual API spend during overnight coding sessions.

For comparison, a skilled software contractor in the US charges $75–150/hour. A junior developer costs $30–50/hour including benefits. A virtual assistant overseas runs $10–25/hour.

The AI sits at the bottom of that range. And unlike humans, it doesn’t need sleep, doesn’t take breaks, and can run multiple instances simultaneously.

So why isn’t everyone running autonomous AI agents for everything?

Because hourly rate isn’t the only variable that matters.

The Real Calculation

The mistake most people make is comparing AI cost directly to human cost. That comparison ignores three critical factors.

First, success rate. As I wrote recently, failure rates compound across multi-step AI tasks. If each step succeeds 95% of the time, a 20-step task succeeds only about 36% of the time (0.95^20 ≈ 0.36). You might pay for 10 hours of AI work and get nothing usable.
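The compounding is easy to check. Here is a minimal sketch. It assumes each step succeeds independently with the same probability and that a failed run is simply re-run from scratch; both are simplifications. The function names are illustrative.

```python
def task_success_rate(step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming independent steps."""
    return step_success ** steps


def expected_cost_per_success(cost_per_run: float, success_rate: float) -> float:
    """Expected spend until one run succeeds (geometric distribution: 1/p runs on average)."""
    return cost_per_run / success_rate


p = task_success_rate(0.95, 20)
print(f"{p:.0%}")  # 36%
# A 10-hour run at $10/hour costs $100; at this success rate that is about $279 per usable result.
print(round(expected_cost_per_success(100.0, p)))  # 279
```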

Second, supervision cost. Autonomous doesn’t mean unsupervised. Someone needs to write the prompt, verify the output, fix the mistakes, and handle the edge cases. That someone is usually you. If you spend 2 hours supervising a 10-hour AI task, your effective hourly cost just changed.

Third, task fit. AI excels at certain work and fails at others. Mechanical tasks with clear completion criteria work well. Creative tasks, judgment calls, and ambiguous requirements don’t. Applying AI to the wrong task burns money without producing value.

The honest calculation isn’t “AI costs $10/hour, humans cost $75/hour, therefore use AI.” It’s:

(AI hourly rate × hours) + (your hourly rate × supervision time) + (cost of failures × failure rate) vs. (alternative cost × hours)

That equation produces different answers for different tasks.
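Here is that equation as a small Python function. It is a sketch under one interpretation. I give the alternative its own hour count, since a human and an agent rarely take the same time. I also read "cost of failures" as the dollars lost on one failed run. All numbers in the usage lines are hypothetical.

```python
def delegation_cost(ai_rate: float, ai_hours: float,
                    your_rate: float, supervision_hours: float,
                    failure_cost: float, failure_rate: float) -> float:
    """Left side: API spend + your supervision time + expected failure loss."""
    return (ai_rate * ai_hours
            + your_rate * supervision_hours
            + failure_cost * failure_rate)


def alternative_cost(alt_rate: float, alt_hours: float) -> float:
    """Right side: what the same work costs done by you, a contractor, or a hire."""
    return alt_rate * alt_hours


# Hypothetical task: 8 agent-hours at $10, 30 minutes of review at $150/hour,
# a 30% chance of losing the $80 run, versus 8 contractor-hours at $75.
ai = delegation_cost(10, 8, 150, 0.5, 80, 0.3)
human = alternative_cost(75, 8)
print(ai, human)  # roughly 179 vs 600
```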

Where the Math Works

Let me be specific about where autonomous AI actually makes economic sense.

Large mechanical refactors. Converting a codebase from one framework to another. Migrating callbacks to promises. Adding type annotations across hundreds of files. These tasks are tedious, well-defined, and verifiable by automated tests. If the test suite passes, the work is done. The AI can run overnight; you review in the morning.

At $10/hour for 8 hours of work, you’re paying $80 for something that would take a human developer a full day at $400–600. Even if the AI fails and you have to re-run twice, you’re ahead.
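The re-run argument is simple division. This sketch uses the paragraph’s own figures: $80 per overnight run and $400–600 for a human day. The helper name is hypothetical.

```python
def runs_within_budget(human_cost: float, run_cost: float) -> int:
    """How many full AI runs fit inside the cost of the human alternative."""
    return int(human_cost // run_cost)


run_cost = 10 * 8  # $10/hour for 8 hours = $80 per run
print(runs_within_budget(400, run_cost), runs_within_budget(600, run_cost))  # 5 7
print(3 * run_cost)  # original run plus two re-runs: 240
```

Even at the low end of the human estimate, five full runs fit inside one human day. That estimate ignores your review time.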

Documentation generation with templates. Given a codebase and a documentation structure, generating API docs, README files, or inline comments is straightforward. The output is verifiable by inspection. The failure mode (bad docs) is annoying but not catastrophic.

Test coverage expansion. Writing tests for existing code is mechanical when the code is already written. The AI reads the implementation, generates tests, runs them. If they pass and cover new lines, the task succeeded. If they fail, iterate.

Data transformation and cleanup. Processing logs, reformatting datasets, standardizing CSV structures. Tasks where the input and output are well-defined and the transformation rules are explicit.
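To make "explicit transformation rules" concrete, here is a minimal sketch of a CSV cleanup. Every rule is written down, and the output can be checked mechanically. The specific rules are illustrative choices, not a standard: lowercase snake_case headers, trimmed cells, and a fixed column count.

```python
import csv
import io


def normalize_header(name: str) -> str:
    """Rule 1: trim, lowercase, and collapse internal whitespace to single underscores."""
    return "_".join(name.strip().lower().split())


def standardize_csv(text: str) -> str:
    """Apply the rules and fail loudly if any row does not match the header width."""
    rows = [row for row in csv.reader(io.StringIO(text)) if row]  # skip blank lines
    if not rows:
        return ""
    header = [normalize_header(h) for h in rows[0]]
    out = io.StringIO()
    writer = csv.writer(out, lineterminator="\n")
    writer.writerow(header)
    # Row numbers count non-blank rows, with the header as row 1.
    for row_no, row in enumerate(rows[1:], start=2):
        cells = [cell.strip() for cell in row]  # Rule 2: trim every cell
        if len(cells) != len(header):  # Rule 3: fixed column count
            raise ValueError(f"row {row_no}: expected {len(header)} fields, got {len(cells)}")
        writer.writerow(cells)
    return out.getvalue()


print(standardize_csv("First Name, Last Name \nAda, Lovelace\n"), end="")
# first_name,last_name
# Ada,Lovelace
```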

The pattern: tasks with clear completion criteria that can be verified automatically.
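That pattern maps onto a simple control loop: attempt, verify mechanically, and stop on success or when the budget runs out. This is a sketch under stated assumptions:

- `attempt` is a hypothetical stand-in for whatever launches the agent.
- Each attempt costs a fixed amount.
- The verifier is a test-coverage check like the one described under test coverage expansion.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional


@dataclass
class LoopOutcome:
    result: Optional[Any]  # None if no attempt passed verification
    attempts: int
    spent: float


def run_until_verified(attempt: Callable[[int], Any],
                       verify: Callable[[Any], bool],
                       cost_per_attempt: float,
                       budget: float) -> LoopOutcome:
    """Retry until a machine check passes or the next attempt would exceed the budget."""
    attempts, spent = 0, 0.0
    while spent + cost_per_attempt <= budget:
        attempts += 1
        spent += cost_per_attempt
        result = attempt(attempts)
        if verify(result):
            return LoopOutcome(result, attempts, spent)
    return LoopOutcome(None, attempts, spent)


# Hypothetical stand-in for "agent writes tests and runs them": succeeds on attempt 2.
baseline = {1, 2, 3}  # line numbers covered before the run


def fake_attempt(n: int) -> dict:
    return {"passed": n >= 2, "covered": baseline | ({4, 5} if n >= 2 else set())}


def coverage_improved(r: dict) -> bool:
    # Done means: tests pass AND they cover lines that were not covered before.
    return r["passed"] and bool(r["covered"] - baseline)


outcome = run_until_verified(fake_attempt, coverage_improved, cost_per_attempt=80.0, budget=240.0)
print(outcome.attempts, outcome.spent)  # 2 160.0
```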

Where the Math Doesn’t Work

Equally important: where does autonomous AI waste money?

Exploratory work. If you don’t know what “done” looks like, the AI doesn’t either. Running an autonomous loop on “figure out why this is slow” burns tokens without direction. You need to do the investigation first, then hand off the mechanical fix.

Creative decisions. Design choices, architecture decisions, UX flows. The AI has no taste. It will iterate forever on “make this better” without converging on something good. These tasks require human judgment; there’s no automation shortcut.

Anything with external dependencies you don’t control. If the task requires an API that might be down, a database that might be slow, or a third-party service with rate limits, the AI will burn cycles on failures it can’t fix. External flakiness plus autonomous loops equals wasted money.

Ambiguous requirements. “Add authentication” sounds simple. But what kind? OAuth? Email magic links? Username/password? Passwordless? The AI will make choices. Those choices might not match what you wanted. Now you’re paying for work you’ll throw away.

Anything you’ll need to maintain. Autonomous AI generates code fast. But generated code needs to be understood, debugged, and extended by humans later. If the AI produces something that works but is incomprehensible, you’ve created a maintenance liability. Speed of creation doesn’t offset cost of ongoing confusion.

The Supervision Variable

Here’s the part that AI productivity content usually ignores: your time isn’t free.

If you’re a solo founder billing clients at $150/hour, every hour you spend supervising AI work costs $150 in opportunity cost. Two hours of supervision on a $10/hour AI task that runs for 5 hours means you paid $50 in API costs plus $300 in your own time. Total: $350 for a task that might have taken you 4 hours ($600) to do yourself.

That’s still a win, but a modest one: $250 saved, or about 1.7x. It’s not the 10x improvement the hype suggests.
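A useful follow-up question is how much supervision you can afford before delegating stops paying off. This sketch uses the figures above: $150/hour for you, a 5-hour run at $10/hour, and 4 hours to do it yourself. The function names are illustrative.

```python
def supervised_cost(ai_rate: float, ai_hours: float,
                    your_rate: float, supervision_hours: float) -> float:
    """API spend plus the opportunity cost of your supervision time."""
    return ai_rate * ai_hours + your_rate * supervision_hours


def break_even_supervision(ai_rate: float, ai_hours: float,
                           your_rate: float, your_hours: float) -> float:
    """Supervision hours at which delegating costs exactly as much as doing it yourself."""
    return (your_rate * your_hours - ai_rate * ai_hours) / your_rate


print(supervised_cost(10, 5, 150, 2))                    # 350
print(round(break_even_supervision(10, 5, 150, 4), 2))  # 3.67
```

In this scenario, past roughly 3 hours 40 minutes of supervision you would have been better off doing the work yourself.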

The math improves when supervision scales. Suppose you can write one prompt that runs overnight without intervention, then check results in 15 minutes the next morning. In that case your supervision cost is minimal: at $150/hour, 15 minutes of review adds about $38, so the $80 overnight run costs under $120 all-in.

But that scenario requires:

- A well-defined task

- A strong test suite or verification method

- Confidence that failures won’t cascade

- Willingness to throw away failed runs without emotional attachment

Most tasks don’t meet all those criteria. Most tasks require iteration, debugging, course correction. That iteration time is part of the real cost.

The Batch Multiplier

Something changes when you can run multiple AI agents in parallel.

A human can only do one thing at a time. An AI agent can be instantiated repeatedly. If you have 10 independent tasks that each take 8 hours of AI time, you can run them simultaneously and have results in the morning.

That parallelism is the genuine superpower.

The economics: 10 parallel agents × 8 hours × $10/hour = $800 for what would be 80 hours of human work. Even with substantial failure rates, even with supervision overhead, the math works if the tasks are parallelizable and independent.
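Parallelism changes calendar time, not cost. Here is a sketch of that arithmetic. It adds one assumption the paragraph doesn’t state: a cap on how many agents you can actually run at once, because of rate limits or machine capacity. The cap is modeled as a hypothetical `max_concurrent` parameter.

```python
import math
from typing import Tuple


def batch_economics(n_tasks: int, hours_per_task: float,
                    rate: float, max_concurrent: int) -> Tuple[float, float]:
    """Return (total cost, wall-clock hours) for independent tasks run in waves."""
    cost = n_tasks * hours_per_task * rate         # same no matter how you schedule it
    waves = math.ceil(n_tasks / max_concurrent)    # batches of at most max_concurrent agents
    return cost, waves * hours_per_task


print(batch_economics(10, 8, 10, max_concurrent=10))  # (800, 8)
print(batch_economics(10, 8, 10, max_concurrent=1))   # (800, 80)
print(batch_economics(10, 8, 10, max_concurrent=4))   # (800, 24)
```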

This is why AI agents shine for batch operations. Processing 50 support tickets. Generating documentation for 20 API endpoints. Running the same refactor across 10 repositories.

The per-task cost matters less than the throughput. You’re not optimizing hourly rate; you’re optimizing calendar time to completion.
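Mechanically, running the same refactor across 10 repositories is a fan-out. Here is a minimal sketch using Python’s standard `concurrent.futures`. `run_refactor` is a hypothetical placeholder for however you launch an agent and check its result, for example by starting a process and then running the repo’s test suite. Threads are a reasonable fit on the assumption that each job mostly waits on an external process or API.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List


def fan_out(targets: List[str], run_one: Callable[[str], bool],
            max_workers: int = 10) -> Dict[str, bool]:
    """Run the same independent job on every target in parallel; map target -> verified?"""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as `targets`
        return dict(zip(targets, pool.map(run_one, targets)))


def run_refactor(repo: str) -> bool:
    # Hypothetical placeholder: launch an agent on `repo`, then return True if its tests pass.
    return not repo.endswith("-legacy")


results = fan_out(["billing", "auth", "search-legacy"], run_refactor)
failed = [repo for repo, ok in results.items() if not ok]
print(failed)  # ['search-legacy']
```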

For a solo founder, the question becomes: what work do I have that’s parallelizable, mechanical, and verifiable? That work is the candidate for autonomous AI. Everything else stays in the human column for now.

The Subscription Consideration

Most of the hourly rate calculations assume API pricing. But Claude Pro and Claude Max subscriptions exist.

At $20/month (Pro) or $100–200/month (Max), you’re paying a flat rate regardless of usage within limits. The marginal cost of an additional AI task is zero, until you hit rate limits.

This changes the math completely.

If you’re already paying $100/month for Claude Max, running an autonomous loop doesn’t cost $10/hour in API fees. It costs nothing additional, until you exceed your allocation.

The economic question shifts from “is this task worth $80 in API costs?” to “is this task worth my time to set up, given I’m already paying the subscription?”

For heavy users, the subscription model makes autonomous agents essentially free at the margin. The only cost is opportunity cost of your attention.

But there’s a catch. Subscription plans have usage limits. Running Claude Code autonomously for hours will hit those limits faster than conversational use. The flat-rate illusion breaks when you exceed your allocation and fall back to API pricing.

The math: understand your usage patterns, calculate when you’ll hit limits, and decide whether the subscription or API pricing works better for your specific workflow.
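The first step of that calculation is a break-even point. This sketch assumes the ~$10.42/hour API burn rate from above. It also assumes all your autonomous hours stay inside the plan’s usage limits. The plans don’t state those limits in hours, so this ignores the point where you would hit them.

```python
def subscription_break_even_hours(monthly_fee: float, api_rate: float = 10.42) -> float:
    """Autonomous hours per month at which API spend equals the flat subscription fee."""
    return monthly_fee / api_rate


def cheaper_option(hours_per_month: float, monthly_fee: float, api_rate: float = 10.42) -> str:
    # Assumes all hours fit within the plan's usage limits (not modeled here).
    return "subscription" if hours_per_month * api_rate > monthly_fee else "api"


for fee in (20, 100, 200):
    print(fee, round(subscription_break_even_hours(fee), 1))
# 20 1.9
# 100 9.6
# 200 19.2
print(cheaper_option(20, 100))  # subscription
```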

The Honest Framework

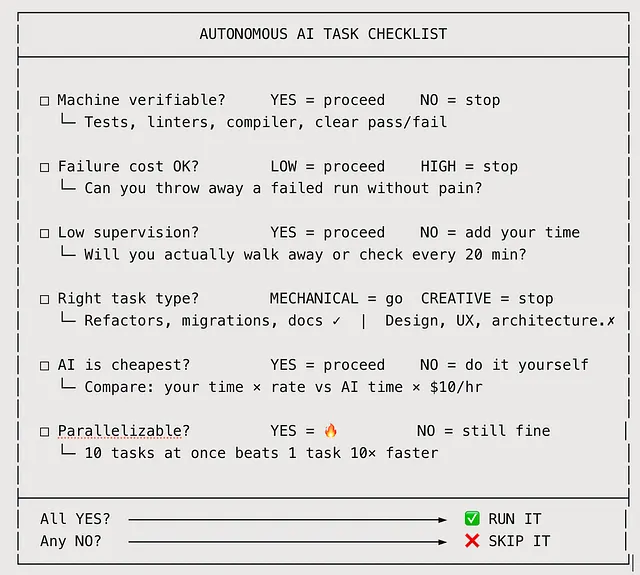

When deciding whether to use autonomous AI for a task, run through this checklist:

Can a machine verify completion? If success requires human judgment, autonomous loops don’t help. You’ll still need to evaluate the output.

What’s the failure cost? If a failed run wastes 8 hours of compute and produces nothing, can you afford that? Multiply by your expected failure rate.

How much supervision will it need? Be honest. Will you actually walk away, or will you check every 20 minutes? That checking time counts.

Is this the right task for AI? Mechanical, well-defined, verifiable tasks work. Creative, ambiguous, judgment-heavy tasks don’t. Don’t force-fit.

What’s the alternative cost? Your own time, a contractor, a junior hire, not doing it at all. Compare honestly.

Can you parallelize? One 10-hour task has different economics than ten 1-hour tasks running simultaneously. Batch operations are where AI throughput shines.
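If you want the checklist in a reusable form, here is one way to encode it. It is a sketch: the field names are hypothetical, and the failure-loss line follows the "multiply by your expected failure rate" rule above. Parallelism is left out because it changes calendar time rather than whether a single task qualifies.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TaskProfile:
    machine_verifiable: bool   # can a test or script confirm completion?
    judgment_heavy: bool       # creative, ambiguous, or taste-dependent?
    run_cost: float            # compute dollars per attempt
    failure_rate: float        # expected share of runs that fail, 0.0 to 1.0
    supervision_hours: float   # honest estimate, including every check-in
    alternative_cost: float    # you, a contractor, a hire, or not doing it


def checklist_concerns(task: TaskProfile, your_rate: float) -> List[str]:
    """Return the checklist items this task fails; an empty list means it qualifies."""
    concerns = []
    if not task.machine_verifiable:
        concerns.append("completion needs human judgment")
    if task.judgment_heavy:
        concerns.append("poor task fit for autonomy")
    # One run, plus the cost of a failed run weighted by the failure rate, plus your time.
    expected = (task.run_cost
                + task.run_cost * task.failure_rate
                + task.supervision_hours * your_rate)
    if expected >= task.alternative_cost:
        concerns.append(f"expected cost ${expected:.0f} is not below the alternative")
    return concerns


overnight_refactor = TaskProfile(True, False, 80.0, 0.3, 0.25, 600.0)
print(checklist_concerns(overnight_refactor, your_rate=150))  # []
```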

The Macro Trend

AI agent pricing is moving toward hourly models because that’s how humans think about labor costs.

Retool published an argument for hourly AI pricing precisely because the token-based pricing model makes cost comparison difficult. When an AI agent costs $10/hour and a human costs $75/hour, the comparison is intuitive.

But intuitive comparisons can mislead. The AI at $10/hour might have a 40% success rate. The human at $75/hour delivers on nearly every task. Effective hourly rate, accounting for failures, might be much closer than the sticker price suggests.
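One way to make "effective hourly rate" concrete is to divide the sticker rate by the success rate. That gives the cost per hour of work you actually keep, assuming failed hours produce nothing usable. In this sketch, the 40% figure is the paragraph’s hypothetical. The human’s 95% is my own placeholder for "nearly every task".

```python
def effective_rate(sticker_rate: float, success_rate: float) -> float:
    """Cost per hour of usable output, assuming failed work is simply discarded."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return sticker_rate / success_rate


print(f"AI:    ${effective_rate(10, 0.40):.2f}/hour")  # AI:    $25.00/hour
print(f"Human: ${effective_rate(75, 0.95):.2f}/hour")  # Human: $78.95/hour
```

On these assumptions the gap shrinks from 7.5x to about 3x, before counting supervision.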

Studies show 20–30% productivity improvements from AI coding tools. Not 10x. Not even 2x. The measured gains are real but modest.

That’s not nothing. A 25% productivity improvement on mechanical work is significant over time. But it’s not “fire your team and replace them with AI.” It’s “augment your workflow with AI for specific task types.”

The $10/hour AI employee exists. It just has a very specific job description.

What I Actually Do

For transparency, here’s how I think about AI agent economics in my own work.

Tasks I delegate to autonomous AI:

- Formatting and restructuring (data transformation, document conversion)

- Adding boilerplate (tests for existing functions, documentation scaffolding)

- Batch processing (the same operation across many files)

- First-draft generation (knowing I’ll edit heavily)

Tasks I keep for myself:

- Anything requiring design decisions

- Debugging novel problems

- Work that depends on external context the AI doesn’t have

- Anything I’ll need to explain to someone else later

The split isn’t about AI capability. It’s about which tasks have the completion criteria and verification methods that make autonomous operation sensible.

For me, maybe 20% of my work fits the autonomous AI category. That 20% gets dramatically cheaper and faster. The other 80% benefits from AI assistance but not AI autonomy.

Your ratio will differ based on what kind of work you do.

The Bottom Line

Running Claude autonomously costs roughly $10/hour. That’s cheaper than any human alternative for the tasks where AI works.

But “works” is load-bearing. The tasks have to be mechanical, well-defined, and verifiable. Failure rates compound for complex tasks. Supervision costs add up. The wrong task fit wastes money.

The $10/hour AI employee is real. It has a narrow job description. Within that description, the economics are compelling.

Outside that description, you’re paying for compute cycles that don’t produce value.

Know which tasks fit before you start the meter.

What tasks have you successfully delegated to autonomous AI? I’m interested in the specific workflows, not the general categories.

Marco Kotrotsos. Tech person. I write about technology, Generative AI, the cloud, design and development.
